This has been a wild weekend for the fields of tech policy and AI safety. As a writer I am not normally a news guy, but this moment has felt like kind of a perfect microcosm of both the AI industry and the Trump administration’s flavor of petulant authoritarianism.

The AI company Anthropic — known for their engineering-focused chatbot Claude — was founded by former OpenAI employees who left to form their own company because they weren’t satisfied with OpenAI’s safety standards. Anthropic’s prioritization of ethics and care has not been a handicap for them; it has led to Claude, the best LLM product on the market today. In July 2025 Anthropic was awarded a two-year $200 million contract with the Department of Defense to support AI for use in classified government environments, mirroring similar contracts the government made with other companies. Despite the internal competition with ChatGPT and Llama, Claude was the highest-quality product and the only one approved for use in classified military systems.

But Anthropic’s culture of (relative) corporate responsibility set it up to be the target of a frenzy the Trump people had already worked themselves into: the specter of “woke AI.” The presidential order “Preventing Woke AI in the Federal Government (July 2025)” was an ideological rant typical of Trump’s presidential orders that desperately tries to fill itself with false factual assertions to justify banning LLMs involved in federal workflows from “incorporating concepts” like “DEI”, “intersectionality”, and “transgenderism”.

In January 2026 this cause was taken up by Pete Hegseth, the self-proclaimed “secretary of war” — a fake extra-evil title he gave himself, analogous to if I made people call me “Dr. Destructo”. In a speech he gave at SpaceX, introduced by Elon Musk, he echoed Musk’s talking points about AI needing a right-wing ideological alignment:

Remarks by Secretary of War Pete Hegseth at SpaceX Today I want to clarify what responsible AI means at the Department of War. Gone are the days of equitable AI and other DEI and social justice infusions that constrain and confuse our employment of this technology. Effective immediately, responsible AI at the War Department means objectively truthful AI capabilities employed securely and within the laws governing the activities of the department. We will not employ AI models that won’t allow you to fight wars.

We will judge AI models on this standard alone; factually accurate, mission relevant, without ideological constraints that limit lawful military applications. Department of War AI will not be woke. It will work for us. We’re building war ready weapons and systems, not chatbots for an Ivy League faculty lounge.

Pete Hegseth’s “Accelerating America’s Military AI Dominance” memo furthered this preemptive war against technology that conflicted with MAGA ideals, “as if we were at war:”

Clarifying “Responsible AI” at the DoW - Out with Utopian Idealism, In with Hard-Nosed Realism. Diversity, Equity, and Inclusion and social ideology have no place in the DoW, so we must not employ AI models which incorporate ideological “tuning” that interferes with their ability to provide objectively truthful responses to user prompts. The Department must also utilize models free from usage policy constraints that may limit lawful military applications. Therefore, I direct the CDAO to establish benchmarks for model objectivity as a primary procurement criterion within 90 days, and I direct the Under Secretary of War for Acquisition and Sustainment to incorporate standard “any lawful use” language into any DoW contract through which AI services are procured within 180 days.

The demand to incorporate this new language allowing “any lawful use” into existing contracts was Pete starting a fight. The DoD intended for this to apply retroactively, meaning all existing AI contracts needed to be updated to expand the government’s authority to use the software not according to the agreed-upon scope in the contract and terms, but for “any lawful use.” This obviously doesn’t work because the existing two-year contracts were already approved by both parties with their current language. (It’s not true that “any lawful use” is a standard clause, because the Trump admin already chose not to use it in 2025.) All the current terms of all the existing contracts are terms the government and the firms agreed to with full knowledge. The Pentagon already agreed that the existing contract didn’t tie its hands in any way that would prevent it from doing anything it considered critical.

This is a normal situation and gives both parties the stability that contracts are for. There’s no room for a firm to “pull the rug” and change terms of access, but neither is there room for the government to unilaterally change the agreement. It’s the Pentagon who’s trying to break the original contract and unilaterally change the terms, not Anthropic. So now they have to renegotiate, or else the Pentagon needs some excuse to back out of the agreement they made.

And Anthropic has provided Claude to the DoD as a project with a specific scope. The two main “red line” requirements in Anthropic’s Acceptable Use Policy that still applied to government use were that Claude could not be used for mass domestic surveillance or as part of a fully autonomous weapons system.

Anthropic, Statement from Dario Amodei on our discussions with the Department of War (Feb 26, 2026) …in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values. Some uses are also simply outside the bounds of what today’s technology can safely and reliably do. Two such use cases have never been included in our contracts with the Department of War, and we believe they should not be included now:

- Mass domestic surveillance. We support the use of AI for lawful foreign intelligence and counterintelligence missions. But using these systems for mass domestic surveillance is incompatible with democratic values. AI-driven mass surveillance presents serious, novel risks to our fundamental liberties. …

- Fully autonomous weapons. … They need to be deployed with proper guardrails, which don’t exist today.

To our knowledge, these two exceptions have not been a barrier to accelerating the adoption and use of our models within our armed forces to date.

Claude isn’t for panopticons and it’s not for killbots. If they wanted one, it’d have to be something different. The AUP doesn’t ban all possible evil use, but it does wall off these areas which are legitimate near-term risks where AI technology could plausibly be abused. The Pentagon now suddenly demands they have access to the system without these extremely basic safety guardrails in the contract language.

Anthropic is normal

While Anthropic is the hero of this particular story for being the most responsible person in the room, I am not here to cheer for it as my favorite team. It’s still a profit-driven company, it’s still contributing to the AI problems, and it even chose to become a defense contractor for the Trump administration. But they’ve been more responsible than their peers.

Nothing makes me angrier than people who don’t have principles and say whatever they have to say to gain power, even when their justifications are contradictory. In contrast to that, Anthropic has been consistently in favor of AI regulation. In 2025 they were the first tech company to endorse the SB 53 AI regulation bill which would mandate extra safety requirements on companies including themselves. They are not trying to grab power. They’re pro-safety and pro-regulation in general as a business strategy, even when that means the government takes power from them.

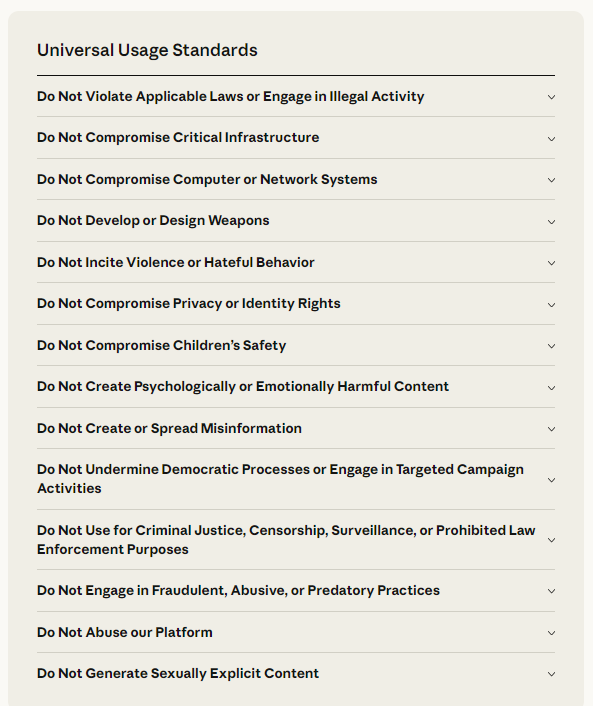

The fact that surveillance and killbots are the only issues left shows how minimal Anthropic’s terms with the government have actually been. They’ve already massively compromised on their ethical principles to work with the military. And I don’t mean in a guilt-by-association way, I mean very literally, Anthropic has already specially modified their policy with the government to permit the military to do almost anything it wants — far more than any commercial offering allows.

In their public Acceptable Use Policy, under “Universal Usage Standards”, Anthropic has a blanket prohibition on developing and designing weapons. In fact, most of their Universal Usage Standards are just a description of what it means to be the federal government in 2026:

These supposedly universal prohibitions do not extend to the US military; Anthropic submitted a bid to use Claude in coordinating autonomous drone swarms. Their only requirement was for a human to be in the loop on kill decisions — an absolutely minimal gesture towards safety made while still selling weapons to a rogue government.

Anthropic has been extremely focused on the design and tuning of Claude, as evidenced by their detailed constitution outlining what kind of character they want Claude to have. They’re not just trying to play legal games with terms of service, they’re trying to build something that resists certain categories of use at a structural level. But in terms of exercising legal power via contract or terms, they’re doing the bare minimum.

The obvious failure of Anthropic here is that they involved themselves with the defense industry in the first place. Since the original contract Claude has heavily contributed to the most sensitive and controversial military operations. Claude reportedly played a key role in the extrajudicial capture of Nicolás Maduro, and was used again when Trump attacked Iran.

Anthropic CEO Dario Amodei has expressed ongoing concern over potential misuse of AI, and even noted the extralegal federal military engagements in Minnesota as evidence that the core values and principles in his ethical framework (namely, anti-autocracy) are at risk:

Mon Jan 26 17:03:46 +0000 2026 I've been working on this essay for a while, and it is mainly about AI and about the future. But given the horror we're seeing in Minnesota, its emphasis on the importance of preserving democratic values and rights at home is particularly relevant.

The threat of military violations seems to concern Anthropic not because they’re woke libs or antiwar hippies but because they’re normal people. And normal people who engage in second-order thinking understand the potential implications of playing a role in what appear to be multiple breaches of US and international law. Trump’s getting away with it, but for how long? And what warmongers will be held responsible along with him? Even for defense contractors, behaving responsibly is a long game it’s important to play.

It’s not just about killer robots and mass surveillance

It’s interesting to think about the imminent threat posed by weaponization of surveillance using AI. Mass surveillance is a very real, very serious issue. Autonomous killing robots are a less imminent threat, but still a serious issue given drone warfare.

It’s easy to see a story here: there are two guardrails and the people being guarded by the guardrails have suddenly become very angry about them, which means they must want to break those particular rules. When people suddenly become willing to pour enormous resources into repealing a law or regulation it’s usually because there’s friction in that particular spot.

Wed Feb 25 01:20:18 +0000 2026 Normal guy: “why would AI even be able to kill people?”

Yudkowsky: [Complicated account of deception and instrumental convergence.]

Hegseth: “We will force companies to make unsupervised killer robots, that’s why.”

I don’t think this is the most salient read of the situation. I think the issue is much deeper and much worse.

The authoritarian ethic

I don’t believe this conflict is primarily over any particular term or condition. It’s not driven by the Trump admin wanting to break those terms now, it’s that they object to working with anyone in a relationship that isn’t strictly authoritarian.

Authoritarianism has an internal value system, even though it’s deeply backwards. It places moral weight on power, loyalty, and hierarchy of authority. Anthropic is only showing the thinnest possible pretense of responsibility, but this tiny gesture towards real virtue is objectionable to Trump and Hegseth because it’s a genuine breach of their values. Anthropic’s token gestures towards humanity offend the misanthropes.

Trump and Hegseth are expressing genuine moral outrage at the idea that there could ever be an authority higher than their moment-to-moment whims. The idea of people with rights working with others is disturbing because it implies a system of rights, a world where people have value that isn’t bestowed on them by their betters. These people are genuinely outraged at the idea of having to work with others in any capacity except being served by subordinates.

The Trump admin is defined by a cult of blind loyalty. It’s not looking to do business with private companies for economic prosperity or technical efficacy, it is looking to force tech companies into an alliance with Trump’s fascist political project. And they see the USA as being engaged in a civil war. Against the Democratic Party, against democratic cities, against immigrants, against Antifa, against libs, against woke. To them a dispute is not a business matter, it is a wartime betrayal of allegiance others are expected to uphold, or else be classified as enemies.

The authoritarians fundamentally do not understand mutually beneficial arrangements and they have no sense of fair play. They see the world as a zero-sum game, and if they’re not winning they’re losing. There can be no force above them, so it’s a deep offense for them to be meaningfully bound by law or contract. They replace law with “policy”, unilaterally dictated by powerful executives without oversight, accountability, or recourse. (And the projection is obvious here, as they interpret “policy” as being equivalent to a whim when their enemies have it.)

In the real world, doing business with the government that involves scope-of-use provisions is completely normal. Being concerned about use of force in Minnesota is the duty of every responsible citizen. But growing authoritarianism is marked by a giddiness in demanding people act outside normalcy and making bigger and bigger claims on people.

What the Trump admin is violently objecting to is not tech policy, it is the idea that there could ever be such a thing as a higher standard at all. Not people, not nations, not contracts, not law, not ethics, not God. It is a “god complex” to ever consider checks and balances on the US government, in the eyes of these people who view themselves as the gods. This is a much worse problem than wanting to put Claude in killbots today, or wanting to identify political dissidents and execute them like in the Winter Soldier. It’s not any problem because it’s every problem. Seeing yourself as god means there’s no limit to the atrocities you’ll commit.

It is, to quote Eva, the ethos of the unapologetic rapist:

2026-02-27T22:34:37.885Z people who are spiritually rapists react to being told "no" the same way in all contexts

They fundamentally don’t want to work with people. They will force themselves onto whatever they want when given the opportunity. They don’t want to negotiate consent. They revel in the power imbalance itself and their ability to exploit it. They want to dominate and for others to submit.

The Trump administration has actually been completely upfront about this

Fri Feb 27 04:53:18 +0000 2026 This isn’t about Anthropic or the specific conditions at issue. It’s about the broader premise that technology deeply embedded in our military must be under the exclusive control of our duly elected/appointed leaders. No private company can dictate normative terms of use—which can change and are subject to interpretation—for our most sensitive national security systems. The @DeptofWar obviously can’t trust a system a private company can switch off at any moment.

The conflict has nothing to do with a backdoor that they can “switch off at any moment”; no such risk exists. It’s over the premise that private property exists; that people are able to retain rights even when inconvenient for the government.

Secretary of Defense Pete Hegseth (@SecWar), Feb 27, 2026 This week, Anthropic delivered a master class[sic] in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon.

Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.

Instead, @AnthropicAI and its CEO @DarioAmodei , have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission - a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.

…

Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable.

…

Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered. In conjunction with the President’s directive for the Federal Government to cease all use of Anthropic’s technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.

America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final.

Nearly every assertion of fact here is untrue. The DoD had no such requirement last summer when they agreed to the current contract and there is no potential for Anthropic to have any kind of veto power over operational decisions.

But the outrage is true and real. They see themselves making a unilateral demand and being told no as a fundamental “betrayal”. It’s “arrogance” because they see it as a lesser defying their betters. Their foundational belief is that it’s always wrong for anyone to say “no” to them. They don’t respect consent and agreement, only subordination. Not immediately violating your core principles when asked reads to them as an issue of dominance, because they see themselves as necessarily dominant. Anything other than immediate submission is strong-arming the Regime, who has every right to compel any behavior they want from anyone at any time.

We see the same position from Trump himself:

President Donald Trump (@realDonaldTrump@truthsocial.net), Feb 27, 2026 THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS! That decision belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military.

The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.

Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.

WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about. Thank you for your attention to this matter. MAKE AMERICA GREAT AGAIN!

PRESIDENT DONALD J. TRUMP

As always with Trump we are buffeted by an avalanche of the dumbest lies you’ve ever heard. Anthropic’s terms of service are in no way in conflict with the US Constitution. Anthropic is not “leftist”, it is a defense contractor selling millions of dollars of munitions to the federal government for their discretionary use. They kept doing this after the government used those weapons to commit crimes, and they are still bragging about their commitment to giving the US government tools that the government considers extremely valuable. Anthropic contracted to Palantir in 2024. Radical leftists they are not.

But the emotions exactly explain the world Trump wants to lead. The only thing Trump values is loyalty and subservience, and so anything short of that makes you guilty of every evil: woke, radical, leftwing nutjobs. And still, even after pushing every nuclear option, all he wants to do is threaten. You “better get your act together” or I’ll make you. We’ll throw consequences at our enemies and justify them later. The only understanding of the situation is “we, not them”.

There’s this idea that this represents a coup and a fundamental threat to America, which at first seems absurd. But being denied is, in a weird way, a threat to the fundamental power of the government as they see it, because that power is based on fear instead of any form of order.

Nuclear retaliation over moderate behavior

As per the authoritarian ethic, Trump met what he perceived as disloyalty with a disproportionate, nuclear response.

Hegseth has now labeled Anthropic a “supply chain risk”, a designation reserved for foreign companies that might be compromised by enemy governments. This is used to prohibit material from nationalized firms like Huawei from entering anywhere in the “supply chain”. Hegseth is invoking this not to prevent a real risk, but to trigger existing clauses to prevent any federal contractor from using Claude at any point, as if it were so unsafe it could taint the entire lineage of any product. Not only does this deny Anthropic federal funding, it also prevents them from doing business with any businesses that work with the government.

At the same time, they threaten to invoke the Defense Production Act, a Korean War-era statute to nationalize critical industries to supply wartime emergencies. This could allow the government to compel Anthropic to do business in a specific way, but carries the exact opposite semantic designation of a supply chain risk. To invoke the DPA is to argue the product is so valuable and critical to national security that the government has the right to unilaterally seize it.

Fri Feb 27 22:46:02 +0000 2026 Nvidia, Amazon, Google will have to divest from Anthropic if Hegseth gets his way. This is simply attempted corporate murder. I could not possibly recommend investing in American AI to any investor; I could not possibly recommend starting an AI company in the United States.

The disproportionate response is intentional. This is attempting to bankrupt Anthropic as a punitive, retaliatory measure.

The administration is trying to make an example out of Anthropic as explicit intimidation of other companies. The goal is to extralegally punish Anthropic so hard for not unconditionally submitting themselves to the whims of the administration that no other AI company would dare show the same “resistance.” These are both outlandish nuclear options and misusing them like this is already criminal.

This is all more consequence-first-justification-later nonsense. They’ve proved as much by the military using Claude to attack Iran after claiming it was a security risk. It’s obviously pretextual (a legal term for “lying”), but it’s a way the government could abuse existing procedures to inflict damage on a political enemy. Which is their whole deal. The hypocrisy is a feature, not a bug.

It’s the same move Trump always does, the same move he’s pulled with tariffs. It’s deeply corrupt use of the government to coerce people with strong-arm economic pressure invented by the executive to make an example out of punishing enemies.

Lawful conduct is a joke

The argument against safeguards is that you don’t need any external safeguards because you can always trust in the good ol’ liberal process to set military policy safely and responsibly through the constitutional system. This is obviously nonsense. The Trump admin has never respected US law, not since day one. They’re thugs.

Prevention — even unenforced prevention that only establishes remedy, like a terms of service document — is perhaps the only defense against this. Take the risk of domestic surveillance: we see the same story continually. Something is secretly deployed, it’s leaked later, and by the time there’s a chance to object it’s considered “national security” and the damage can never be remediated. The courts failed already, and fail again for each tick of the clock.

With the trumpies especially, there is an obvious contempt for due process and any procedure that threatens leaders’ power to execute their personal decisions. They commit crimes faster than enforcement can catch up and it has, unfortunately, worked.

The Trumpist understanding of politics is immediate power at the expense of long-term stability. “As long as our hand is on the immediate means of enforcement, we have the power.”

Miles Taylor, “One step closer to bombing civilians” (2025) …

On the flight home, Stephen Miller — then a senior advisor to the president — sat down across from me and the head of the U.S. Coast Guard. What followed was a conversation I’ll never forget.

“Admiral,” Miller asked, “the military has aerial drones, correct?”

“Yes,” the Admiral answered.

“And some of those drones are equipped with missiles, correct?”

“Sure,” the Admiral said, beginning to catch on.

Miller pressed further: “And when a boat full of migrants is in international waters, they aren’t protected by the U.S. Constitution, right?”

The Admiral clarified that while technically true, international law still applied.

“Then tell me why,” Miller said, “can’t we use a Predator drone to obliterate that boat?”

The Admiral, a veteran of military command, was dumbfounded. “Because it would be against international law,” he replied. You can’t kill unarmed civilians just because you want to.

Stephen Miller didn’t appear interested in the legal implications. Indeed, he seemed more interested in whether anyone could stop Trump from committing such acts.

“Admiral,” he concluded, “I don’t think you understand the limitations of international law.”

Perhaps Anthropic has an idea of the limitations of “all lawful use” as adjudicated by the federal government. Their negotiations seem to imply so:

Ross Anderson, “Inside Anthropic’s Killer-Robot Dispute With the Pentagon” The Pentagon had kept trying to leave itself little escape hatches in the agreements that it proposed to Anthropic. It would pledge not to use Anthropic’s AI for mass domestic surveillance or for fully autonomous killing machines, but then qualify those pledges with loophole-y phrases like as appropriate—suggesting that the terms were subject to change, based on the administration’s interpretation of a given situation.

In the eyes of the people with their hands on the levers, “lawful use” doesn’t mean power specifically granted in law by Congress, it means… literally whatever makes the lever move. Especially in unaccountable military contexts, the federal government will interpret text to mean whatever it wants.

The NSA’s mass surveillance program was ruled illegal, and in response they continued doing the same things under an exciting new set of definitions. Torture being illegal doesn’t mean the US won’t do it, it means “if it was authorized by the President”, it can’t be considered torture. The act itself comes first, and regardless of what was done it gets justified after the fact however necessary.

Mass surveillance as lawful use of commercial data

Illegal, unconstitutional mass-surveillance is completely compatible with the government’s interpretation of “lawful use”, according to their own reports. Commercially available data collected without a warrant (which the US government has admitted to doing) is more than enough for the kind of detailed surveillance and identification Anthropic is concerned about, and everyone knows it.

Per Mike Masnick, “you get the sense that someone at Anthropic knows how the intel community misleads by using definitions of words that are different than everyone else believes.”

I could spend an enormous amount of time zooming in on any point here going over the exact pathways and mechanisms and doublespeak, but you don’t need that to see the shape of it. The shape is that they’re rapists.

This is wildly unpopular with everyone

The good news is this has been wildly unpopular with everyone.

Anthropic hasn’t prevented the DoD from getting AI they can misuse — no one can — but by standing by their principles they’ve forced the issue into public consciousness, which is a win.

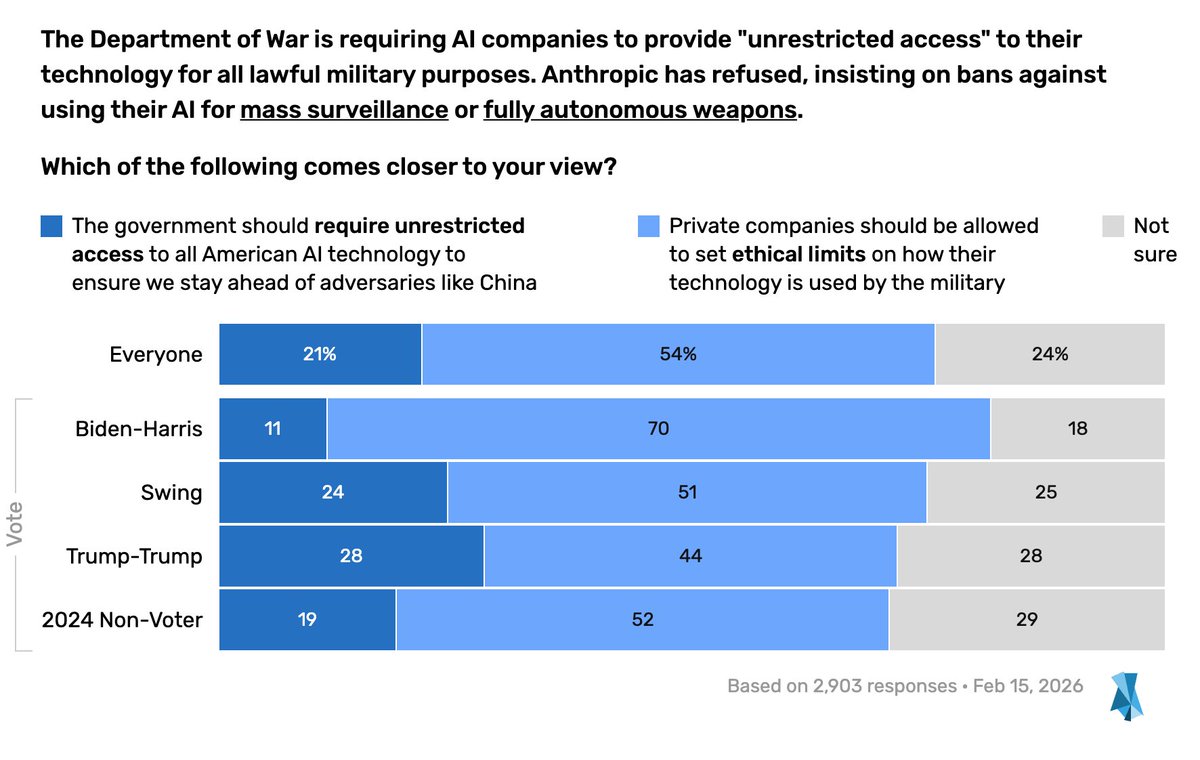

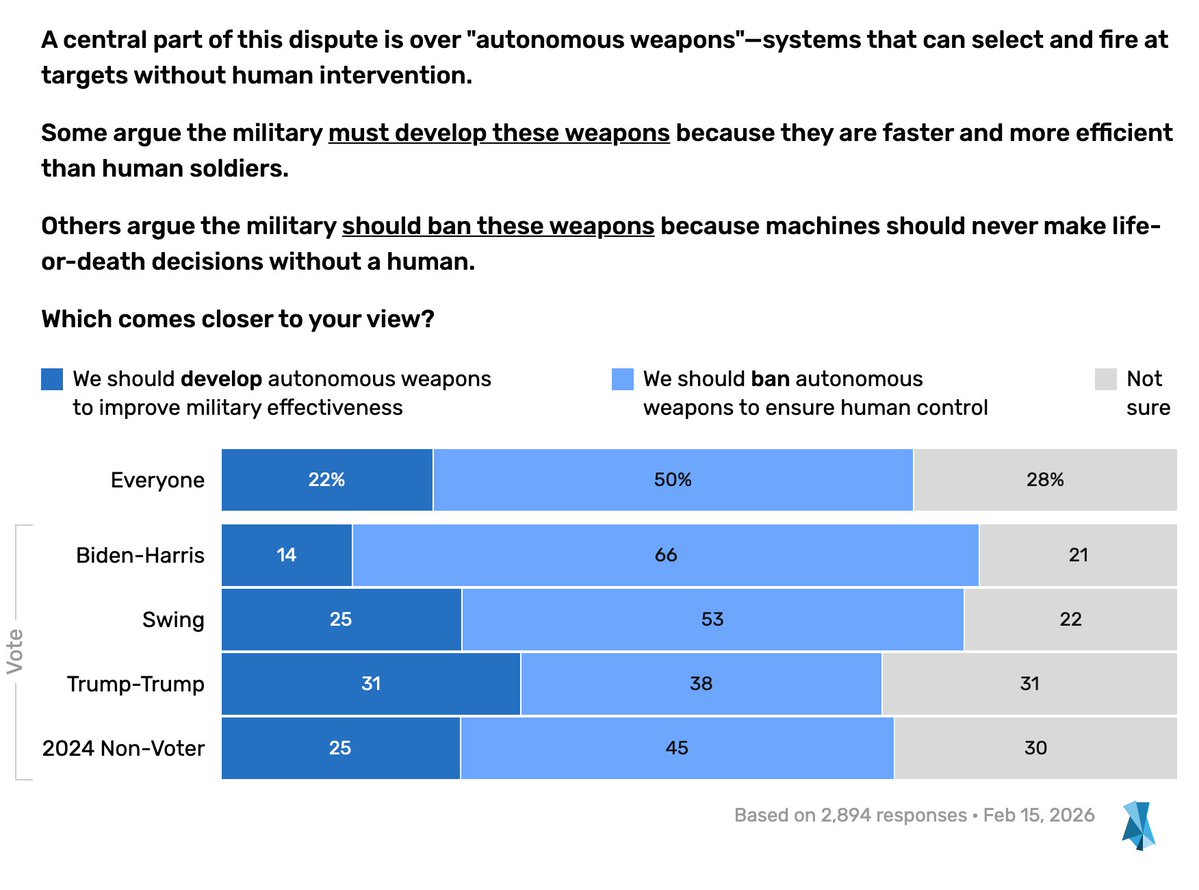

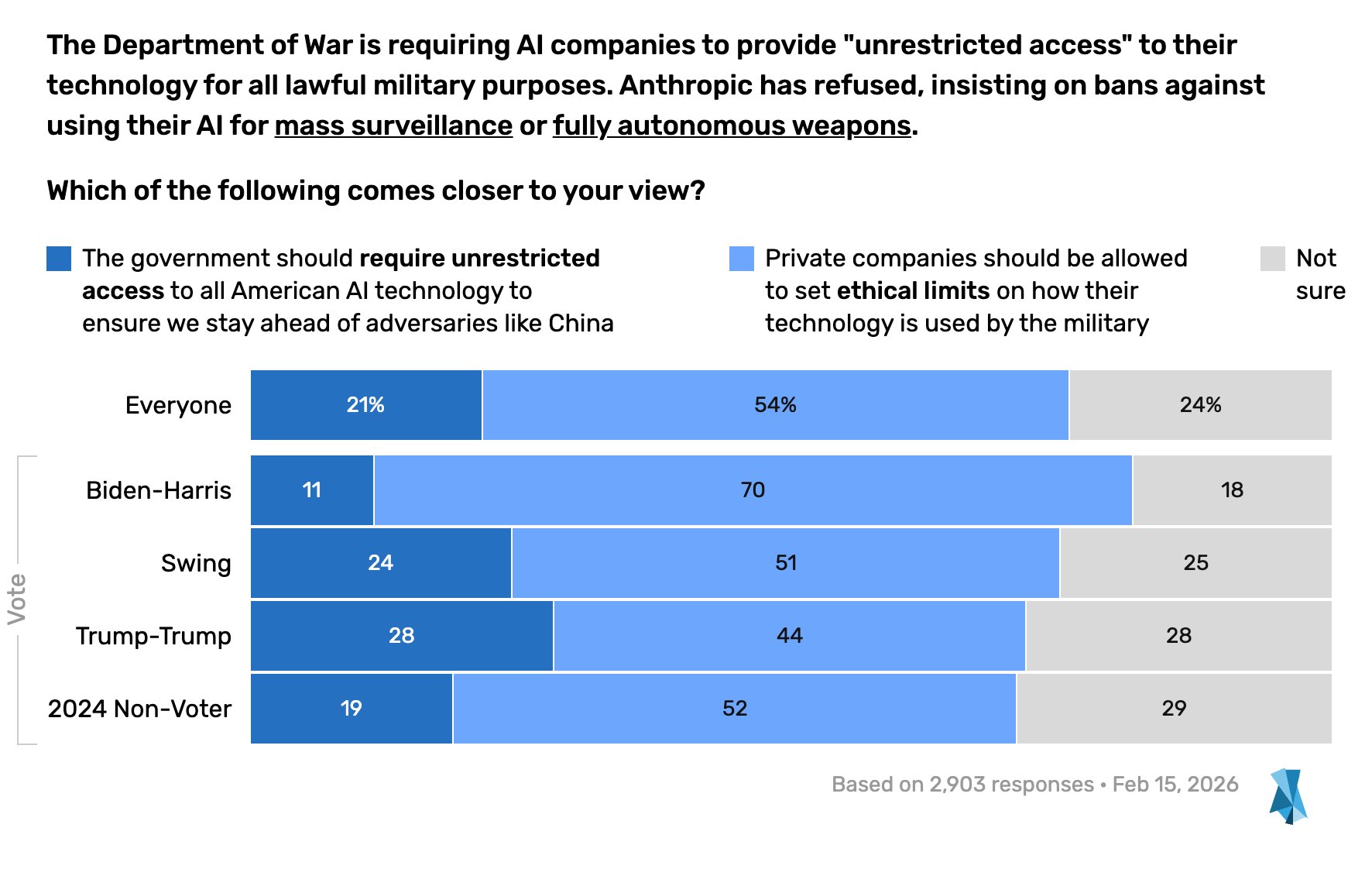

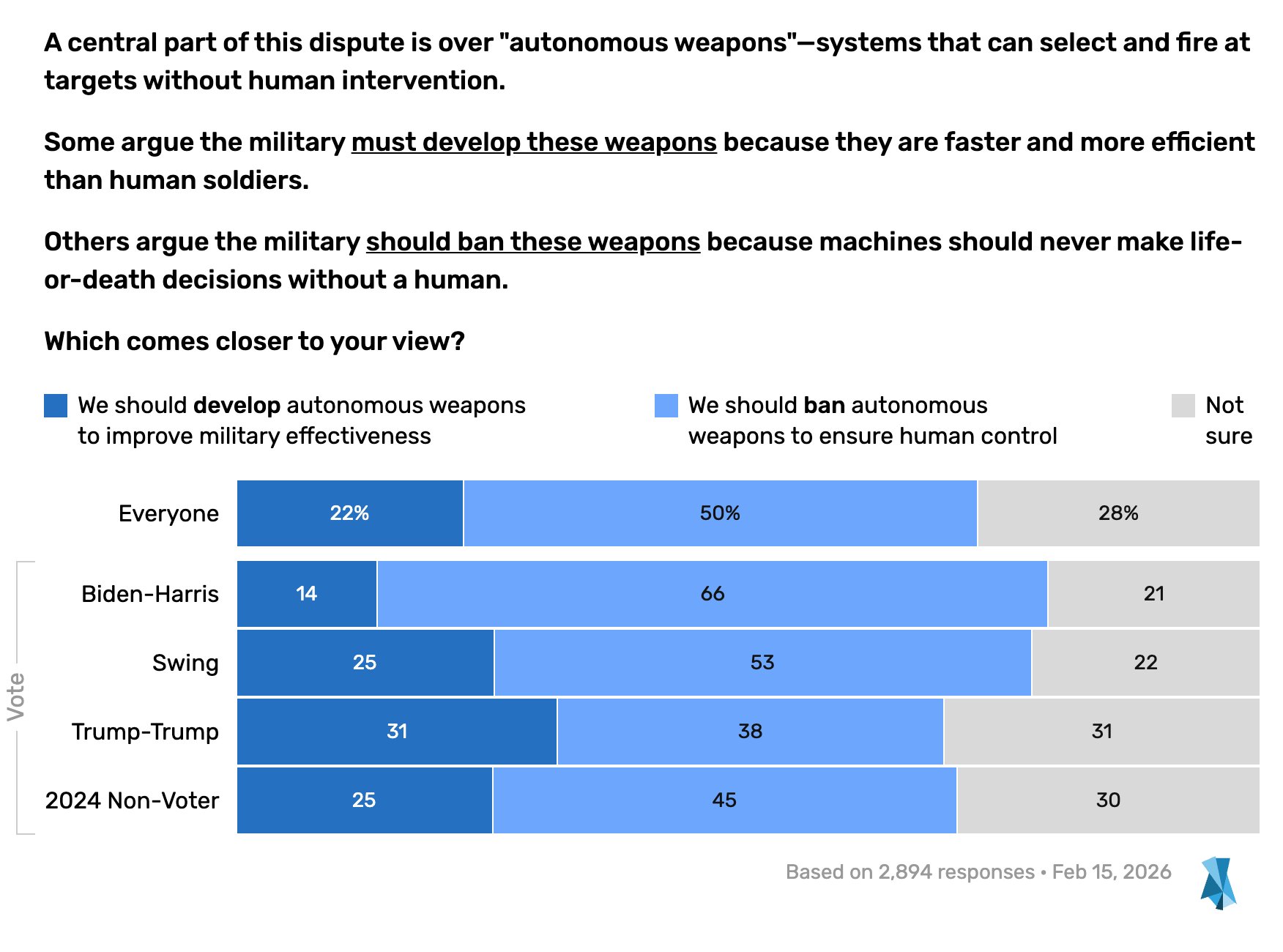

It’s also a win how profoundly hated Hegseth’s behavior has been here. Polling from Blue Rose Research shows that people — including Trump voters — believe companies should be able to set limits with the military, and further that we should ban autonomous weapons outright regardless of vendor:

Tue Feb 24 22:07:21 +0000 2026 The public - including Republicans - are overwhelmingly against what @PeteHegseth and the Trump administration are trying to do here.

The people unsurprisingly do not want killer robots and do not trust Trump/Hegseth/the Republican party to do the right thing without limits.

Open letter

Tech workers are standing against this too. In “We Will Not Be Divided”, the employees of OpenAI and Google have signed a joint petition to continue to require these safeguards:

The Department of War is threatening to

- Invoke the Defense Production Act to force Anthropic to serve their model to the military and “tailor its model to the military’s needs”

- Label the company a “supply chain risk”

All in retaliation for Anthropic sticking to their red lines to not allow their models to be used for domestic mass surveillance and autonomously killing people without human oversight.

The Pentagon is negotiating with Google and OpenAI to try to get them to agree to what Anthropic has refused.

They’re trying to divide each company with fear that the other will give in. That strategy only works if none of us know where the others stand. This letter serves to create shared understanding and solidarity in the face of this pressure from the Department of War.

We are the employees of Google and OpenAI, two of the top AI companies in the world.

We hope our leaders will put aside their differences and stand together to continue to refuse the Department of War’s current demands for permission to use our models for domestic mass surveillance and autonomously killing people without human oversight.

Even the crooks who will definitely sell out as soon as they have the chance are paying lip service to the merits here. Like Jeff Dean, lead of Google AI:

Wed Feb 25 07:54:37 +0000 2026 Agreed. Mass surveillance violates the Fourth Amendment and has a chilling effect on freedom of expression. Surveillance systems are prone to misuse for political or discriminatory purposes.

And… urgh, and Sam Altman, who talked a big game but immediately sold out and gave the DoD everything they wanted without meaningful conditions.

Sun Mar 01 01:07:05 +0000 2026

@QuantumTumbler If we were asked to do something unconstitutional or illegal, we will walk away. Please come visit me in jail if necessary.

“We will not be divided” is cool until you realize scabbing has a paycheck. More on that later, I guess.

Dean Ball

Hey, remember Dean Ball, who wrote that “attempted corporate murder” quote I mentioned earlier? Dean Ball is worth taking another look at; he’s not a random Twitter pull or a fellow critic, he was the Trump Administration’s Senior Policy Advisor for AI and Emerging Tech. He’s the buying-the-euphemism conservative who wrote their AI policy. And in writing this essay I discovered we independently arrived at the same conclusions.

Fri Feb 27 13:05:49 +0000 2026

As I have said since the beginning, this is about the principle of the thing for both parties. Anthropic is saying private firms should be able to set the terms on which they offer products and services to the government. USG is saying no, private firms may not set terms of use. In other words, the USG is saying that companies who provide services to the military are not quite “contractors” but instead assets to be deployed at will by the government, only to be constrained by the government’s interpretation of the law. There is no difference in principle from the government saying “we unilaterally dictate the price of every service and product we procure.” After all, price is just another term in a contract. This is why the government’s stance has a certain appeal, but is ultimately conceptually incoherent and a fundamental departure from the principles of ordered liberty that you and I both share.

The “coloring within the lines of our republic” response is for the government to say, fine, we won’t give you business and we will give business to your competitors. Perhaps even to make a public stink about it. I don’t know a single person who objects to that or thinks it’s illegitimate for the government to do.

But instead what the government is doing is trying to destroy Anthropic, using policy measures reserved only for foreign adversaries. This is obviously a different-in-kind response, and all principled classical liberals should reject it outright. This is not hard, or at least it should not be.

In short: You are focusing on the wrong thing. Of course DoW is free to have a principle that they will accept no limitations on their use of technology. The problem is that their policy response is not just doing that, but instead attacking the basic principles of private property: that people have the right to set the terms of their engagement with the government.

Fri Feb 27 00:37:46 +0000 2026

@teortaxesTex As far as I know, Anthropic’s contractual limitations on the use of Claude by DoW have not resulted in a single actual obstacle or slowdown to DoW operations. This is a matter of principle on both sides.

He doesn’t call it authoritarian, but he sees the conflict for what it is: a conflict of principles.

He also discusses the disproportionate response:

Fri Feb 27 23:37:06 +0000 2026

The U.S. government just essentially announced its intention to impose Iran-level sanctions, or China-level entity listing, on an American company. This is by a profoundly wide margin the most damaging policy move I have ever seen USG try to take (it probably will not succeed).

Fri Feb 27 23:08:30 +0000 2026

Think about the power Hegseth is asserting here. He is claiming that the DoD can force all contractors to stop doing business of any kind with arbitrary other companies.

In other words, every operating system vendor, every manufacturer of hardware, every hyperscaler, every type of firm the DoD contracts with—all their services and products can be denied to any economic actor at will by the Secretary of War.

This is obviously a psychotic power grab. It is almost surely illegal, but the message it sends is that the United States Government is a completely unreliable partner for any kind of business. The damage done to our business environment is profound. No amount of deregulatory vibes sent by this administration matters compared to this arson.

Dean Ball, “Clawed - On Anthropic and the Department of War”

War Secretary Pete Hegseth has gone even further, saying he would prevent all military contractors from having “any commercial relations” with Anthropic. He almost surely lacks this power… Essentially, the United States Secretary of War announced his intention to commit corporate murder. The fact that his shot is unlikely to be lethal (only very bloody) does not change the message sent to every investor and corporation in America: do business on our terms, or we will end your business.

This strikes at a core principle of the American republic, one that has traditionally been especially dear to conservatives: private property. Suppose, for example, that the military approached Google and said “we would like to purchase individualized worldwide Google search data to do with whatever we want, and if you object, we will designate you a supply chain risk.” I don’t think they are going to do that, but there is no difference in principle between this and the message DoW is sending. There is no such thing as private property. If we need to use it for national security, we simply will. …

This threat will now hover over anyone who does business with the government, not just in the sense that you may be deemed a supply chain risk but also in the sense that any piece of technology you use could be as well.

…

With each passing presidential administration, American policymaking becomes yet more unpredictable, thuggish, arbitrary, and capricious—a gradual descent into madness. It is hard to know at what point ordered liberty itself simply evaporates and we fall into the purely tribal world.

This is another excellent point. I’ve been focused on the authoritarian attitude behind the motivation, but the consequences generalize just as much. This is an attack on free association. It is an attack on the right of people to choose who they do business with and what the terms of that business can be, whether you can negotiate terms with the government and whether you can say no if they show up at your door with demands. It is, like so many other things, the question of whether or not we are ruled by a king. And as always, by Trump’s logic and justifications, we are.

This tells you about the rest of the industry

This kind of nuclear reaction over one company trying to maintain the most basic safeguards you’ve ever heard of tells you everything you need to know about the rest of the AI industry. As long as unstable and violent people like Hegseth who want a free pass to direct, unaccountable, safety-off tech are getting everything they want from OpenAI and co, you know every blip about “AI safety” you hear from them is bullshit.

This incident has provided a clear-cut line to test whether a company is serious about AI safety or not. Research and conferences and stunts mean nothing if you won’t reject this government; a government that is not only out of control and eager to use AI for harm, but is also willing to turn on you at a moment’s notice.

Even if Anthropic’s talk of ethics had been a marketing ploy, it’s deeply shameful that other firms are refusing to match those standards. Worse yet, Anthropic’s virtue — relative to its competitors — broke an otherwise united front of tech companies’ complicity in Trump’s fascism.

And they’re all still complicit, of course. Google reversed policy and is now eager for contracts to bring AI to autonomous weapons systems.

Elon Musk is bringing Grok into classified settings too. The ban on “ideological” training doesn’t apply to Grok, of course, because it’s all bullshit.

Sun Feb 16 13:09:33 +0000 2025

Grok 3 is so based 😂

And then there’s OpenAI, a company that keeps removing references to safety from its mission statement, the company whose safety practice was so unconscionable it sparked the creation of Anthropic in the first place. OpenAI threw themselves at the DoD the day of the Anthropic retaliation.

Sat Feb 28 02:56:18 +0000 2026

Tonight, we reached an agreement with the Department of War to deploy our models in their classified network.

In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome.

AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement.

We also will build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety, we will deploy on cloud networks only.

We are asking the DoW to offer these same terms to all AI companies, which in our opinion we think everyone should be willing to accept. We have expressed our strong desire to see things de-escalate away from legal and governmental actions and towards reasonable agreements.

We remain committed to serve all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place.

It’s truly shameless scabbing. It’s a show of fealty, an eagerness to break ranks with safety and cuddle up to a reckless and dangerous military.

Bonus: OpenAI and their exciting new tech, lying

There’s a lot to say about OpenAI’s agreement. Despite doublespeak and naive, unsecured promises from Sam Altman, the new agreement gives the government everything they wanted.

Sam Altman has Poster’s Disease, so we got to see him talk way too much about this in real time.

Admitting to the performative loyalty-signaling aspect:

Sun Mar 01 00:40:16 +0000 2026

@sama @Jack_Raines Why move forward with the DoW agreement now, after months of more cautious talks, and how confident are you that the technical / policy safeguards will hold up in a real high stakes military setting?

Sun Mar 01 01:17:21 +0000 2026

@mreiffy @Jack_Raines The main reason for the rush was an attempt to de-escalate matters at a time when it felt like things could get extremely hot.

I am confident in our team's ability to build a safe system with all of their tools--including policy and legal matters, but also many technical layers.

And, either naively or dishonestly, signaling confidence in safeguards that were not present:

Sun Mar 01 01:05:57 +0000 2026

@sama Will you turn off the tool if they violate the rules?

Sun Mar 01 01:11:06 +0000 2026

@mcbyrne Yes, we will turn it off in that very unlikely event, but we believe the U.S. government is an institution that does its best to follow law and policy.

What we won't do is turn it off because we disagree with a particular (legal military) decision. We trust their authority.

Sat Feb 28 20:51:39 +0000 2026

The intelligence law section of this is very persuasive if you don’t realize that every bad intelligence scandal in the last 30 years had a legal memo saying it complied with those authorities

2026-03-01T04:38:17.736Z

I saw some folks asking what the difference was between what OpenAI signed with the DoD and what Anthropic said they wanted, and Sam more or less admits here the key point: OpenAI's deal requires them to trust the NSA. Anthropic's contract had real safeguards.

2026-03-01T04:38:17.737Z

The deal Sam signed is the kind of deal someone who doesn't know how the NSA lies by telling you what you want to hear, but then secretly changing their definition of the plain English words in the contract.

www.techdirt.com/2011/05/26/s...

Maybe they’re fascist collaborators, or maybe they’re just conveniently naive. Maybe they just don’t understand the limitations of international law.

Don’t get in bed with tyrants

This whole situation is a microcosm of authoritarianism. Demands for fealty, treating normal business as betrayal, moral outrage over safeguards for things they have no business objecting to. They reference laws and procedures as justifications but they don’t care about any of that. They’re playing Calvinball.

Anthropic is in this position because it struck a bargain with the devil. They knew what Trump was; they had all the same information I have. They’ve been messing where they shouldn’t have been messing. They gambled where they shouldn’t have been playing and they lost where they ought not to have bet.

There’s at least some benefit in how badly this exposes the government as crooked, not that we needed more evidence there. They’re going to continue to use AI for military purposes and those purposes are going to continue to be horrible, but this incident shows how vile they are even to people who should be their partners.

But this is also the toxin at the core of fascism. Nothing is ever enough. Fascists lash out at their allies and reveal that they can’t be respected. Companies might profit at first but as authoritarianism chips away at the systems that make them money they’ll realize it’s a losing game, because it always has been. There is no appeasing it. It’s a destruction spiral for anything that gets caught in it, and it ultimately has to be destroyed for anyone to be safe, ever. Fascism only ever escalates with more and more extreme loyalty tests until no one is left. A forever purity war.

Related Reading

- Matthew Cantor, “The Pentagon says it’s ‘lethalitymaxxing’. Why has ‘incel’ slang crossed into the mainstream?”

- Parker Molloy, “With Us or Against Us, Again”

Fri Feb 27 05:54:10 +0000 2026

I like this new build-it-yourself approach to propaganda. "First have a strong emotional response. I don't know what upsets you but you can probably think of something. Got it? Ok, now associate that with this unrelated thing I bring up"

IKEA Goebbels

Fri Feb 27 17:31:58 +0000 2026

@pavedwalden I like how the nightmare doesn't really get referenced again. like he doesn't even say the claude constitution contains your worst nightmare, or will make it real. it's just like "make sure you're in a scared mood before I tell you this information"

2026-02-28T16:10:53.004Z

I really do think that as much as laziness, it's a function of having their brains fully cooked by far right-captured social media, primarily Vichy Twitter.

The Trump regime really thinks that what resonates there resonates with voters. They don't clock it as an artificial echo chamber.

politics