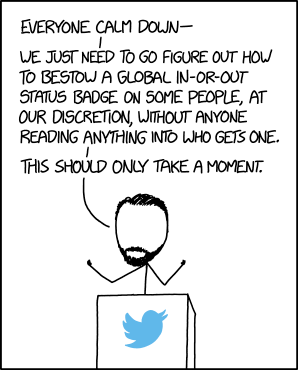

Remember when Elon Musk was trying to weasel out of overpaying for Twitter? During that specific May 2022-Jul 2022 period, a very artificial discourse was manufactured over the problem of “fake accounts” on Twitter.

The reason it was being brought up was very stupid, but the topic stuck with me, because it’s deeply interesting in a way that the conversation at the time never really addressed.

So this is a ramble on it. I think this is all really worth thinking about, just don’t get your hopes up that it’s building to a carefully-constructed conclusion. ;)

Argument is stupid

First, to be clear, what was actually being argued at the time was exceedingly stupid. I’m not giving that any credit.

After committing to significantly overpay for Twitter, with no requirement to do due diligence first (yes, really!), Elon Musk tried to call off the deal.

Apr 21, 2022: If our twitter bid succeeds, we will defeat the spam bots or die trying!

May 3, 2022: That is why we must clear out bots, spam & scams. Is something actually public opinion or just someone operating 100k fake accounts? Right now, you can’t tell.

And algorithms must be open source, with any human intervention clearly identified.

Then, trust will be deserved.

May 13, 2022: Twitter deal temporarily on hold pending details supporting calculation that spam/fake accounts do indeed represent less than 5% of users https://www.reuters.com/technology/twitter-estimates-spam-fake-accounts-represent-less-than-5-users-filing-2022-05-02/ …

This was a pretty transparent attempt to get out of the purchase agreement after manipulating the price, and it was correctly and widely reported as such.

Scott Nover, “Inside Elon Musk’s legal strategy for ditching his Twitter deal”

Elon Musk has buyer’s remorse. On April 25, the billionaire Tesla and SpaceX CEO agreed to buy Twitter for $44 billion, but since then the stock market has tanked. Twitter agreed to sell to Musk at $54.20 per share, a 38% premium at the time; today it’s trading around $40.

That’s probably the real reason Musk is spending so much time talking about bots.

I don’t want to get too bogged down in the details of why Elon was using this tactic, but fortunately other people wrote pages and pages about it, so I don’t have to.

Fake Twitter accounts
